OLS Matrix Form

We present here the main OLS algebraic and finite-sample results in matrix form. In the model \(y = X\beta + e\), \(y\) and \(e\) are column vectors of length \(n\) (the number of observations), and \(X\) is a matrix of dimensions \(n \times k\), where \(k\) is the number of columns of \(X\). The design matrix is the matrix of predictors/covariates in a regression, and \(X\) is sometimes called the design matrix. With a leading column of ones for the intercept, it has the form

\[ X = \begin{bmatrix} 1 & x_{11} & x_{12} & \dots & x_{1,k-1} \\ 1 & x_{21} & x_{22} & \dots & x_{2,k-1} \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 1 & x_{n1} & x_{n2} & \dots & x_{n,k-1} \end{bmatrix} \]
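As a rough illustration of the design matrix described above, the sketch below builds an \(n \times k\) matrix by prepending a column of ones to the predictors. The helper name `design_matrix` and the sample numbers are hypothetical, chosen only for this example, and the intercept column is an assumption about how the matrix is laid out.

```python
import numpy as np


def design_matrix(predictors):
    """Prepend a column of ones to an (n, k-1) array of predictors.

    Hypothetical helper for illustration; assumes the model has an intercept.
    """
    predictors = np.asarray(predictors, dtype=float)
    if predictors.ndim == 1:
        # A single predictor is treated as one column.
        predictors = predictors.reshape(-1, 1)
    ones = np.ones((predictors.shape[0], 1))
    return np.hstack([ones, predictors])


# Made-up data: n = 4 observations and 2 predictors, so k = 3 columns.
raw = np.array([[2.0, 1.0], [3.0, 0.0], [5.0, 4.0], [7.0, 2.0]])
X = design_matrix(raw)
print(X.shape)
```

Each row of `X` corresponds to one observation and each column to one regressor, with the first column carrying the intercept.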

Two vector products recur throughout. For a vector \(x\) of length \(n\), \(x'x\) is the sum of squares of the elements of \(x\) (a scalar), while \(xx'\) is an \(n \times n\) matrix whose \(ij\)th element is \(x_i x_j\).
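The two vector products can be checked numerically. This is a minimal sketch with a made-up vector; the variable names are illustrative only.

```python
import numpy as np

# Made-up vector of length n = 3.
x = np.array([1.0, 2.0, 3.0])

# x'x: the inner product, a scalar equal to the sum of squared elements.
inner = x @ x

# xx': the outer product, an n x n matrix whose (i, j) element is x_i * x_j.
outer = np.outer(x, x)

print(inner)
print(outer)
```

The diagonal of the outer product holds the squared elements, so its trace equals the inner product.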

Mean squared error. At each data point, using the coefficients results in some error; collecting these \(n\) errors in the vector \(e\), the mean squared error is \(\frac{1}{n} e'e\), the sum of squared errors divided by \(n\). For the coefficients to be uniquely determined, \(X\) must have full column rank: there is no nonzero \((k \times 1)\) vector \(c\) such that \(Xc = 0\). That is, no column is a linear combination of the other columns.
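The rank condition and the mean squared error can be sketched together in code. The example below assumes the standard normal-equations estimator \(\hat\beta = (X'X)^{-1}X'y\), which is not spelled out in the text above; the function name `ols_fit` and the data are hypothetical.

```python
import numpy as np


def ols_fit(X, y):
    """Fit OLS via the normal equations X'X b = X'y (illustrative sketch)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, k = X.shape
    # Full column rank: no nonzero c with Xc = 0.
    if np.linalg.matrix_rank(X) < k:
        raise ValueError("X does not have full column rank")
    beta = np.linalg.solve(X.T @ X, X.T @ y)
    e = y - X @ beta          # vector of n errors
    mse = (e @ e) / n         # e'e / n
    return beta, e, mse


# Made-up data: intercept plus one predictor.
x = np.array([0.0, 1.0, 2.0, 3.0])
y = np.array([1.0, 3.0, 5.0, 8.0])
X = np.column_stack([np.ones_like(x), x])
beta, e, mse = ols_fit(X, y)
print(beta, mse)  # approximately [0.8, 2.3] and 0.075

# A rank-deficient matrix: the third column is twice the second,
# so c = (0, 2, -1) gives X_bad @ c = 0.
X_bad = np.column_stack([np.ones_like(x), x, 2.0 * x])
c = np.array([0.0, 2.0, -1.0])
print(X_bad @ c)
```

Solving the linear system with `np.linalg.solve` avoids forming the inverse of \(X'X\) explicitly; for ill-conditioned problems a least-squares routine such as `np.linalg.lstsq` is usually a safer choice.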

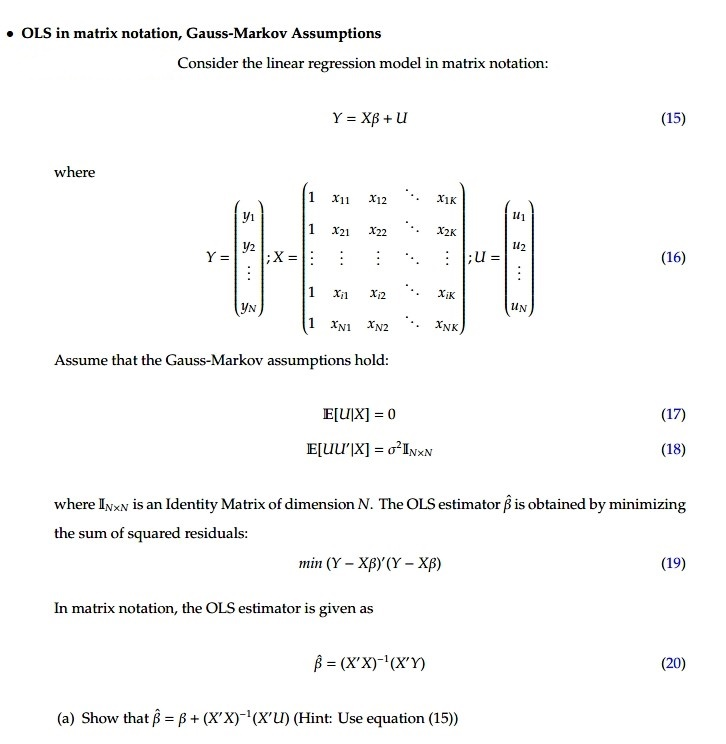

Solved OLS in matrix notation, GaussMarkov Assumptions


SOLUTION Ols matrix form Studypool


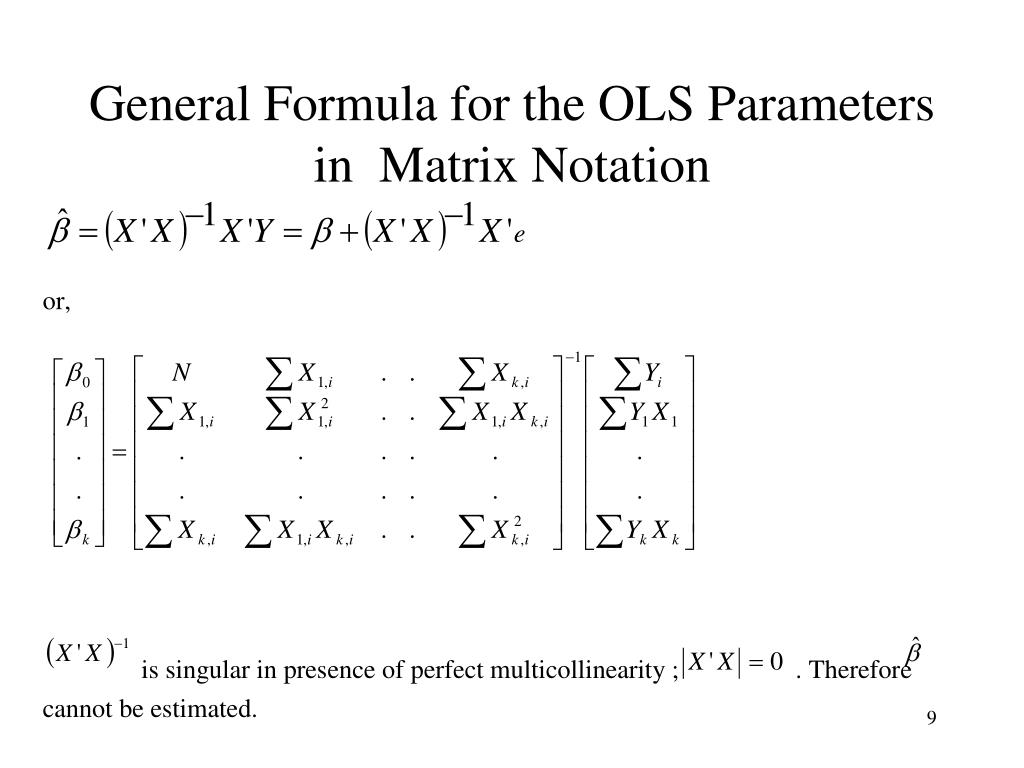

PPT Econometrics 1 PowerPoint Presentation, free download ID1274166


OLS in Matrix form sample question YouTube


Vectors and Matrices Differentiation Mastering Calculus for



Ols in Matrix Form Ordinary Least Squares Matrix (Mathematics)


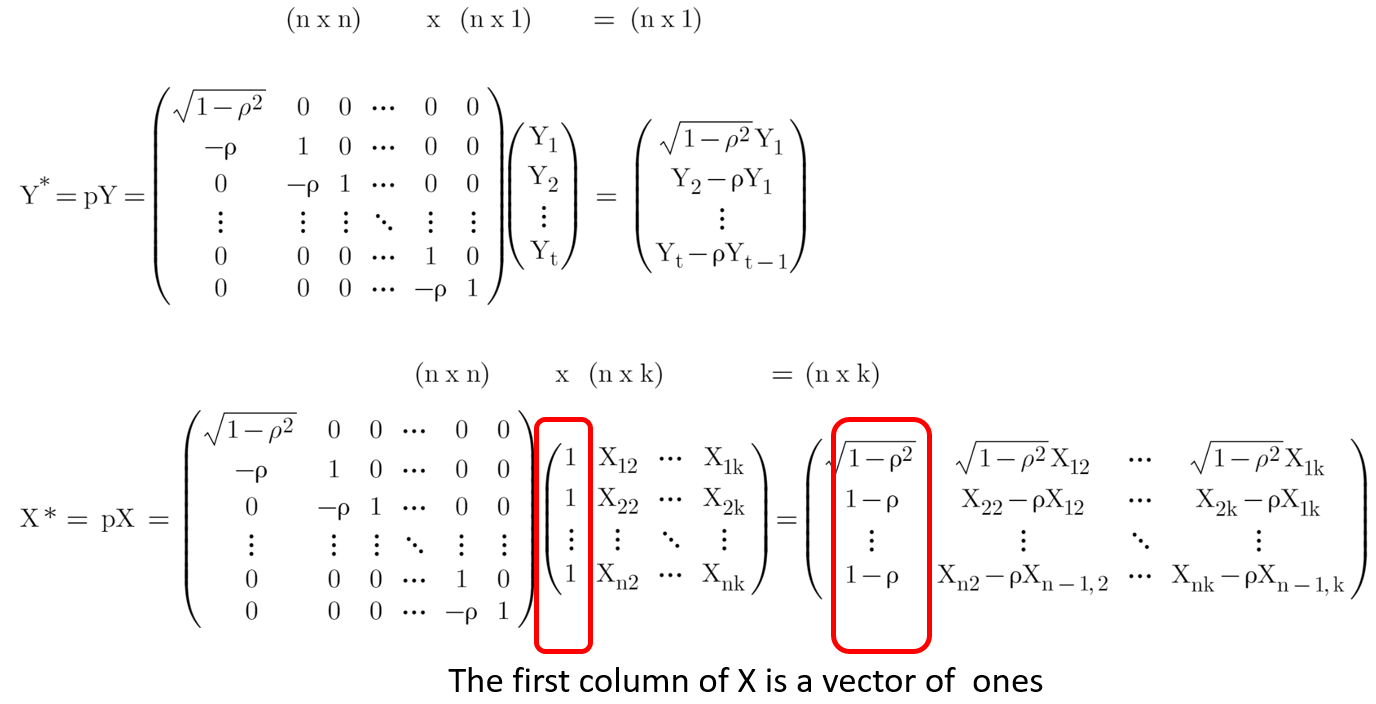

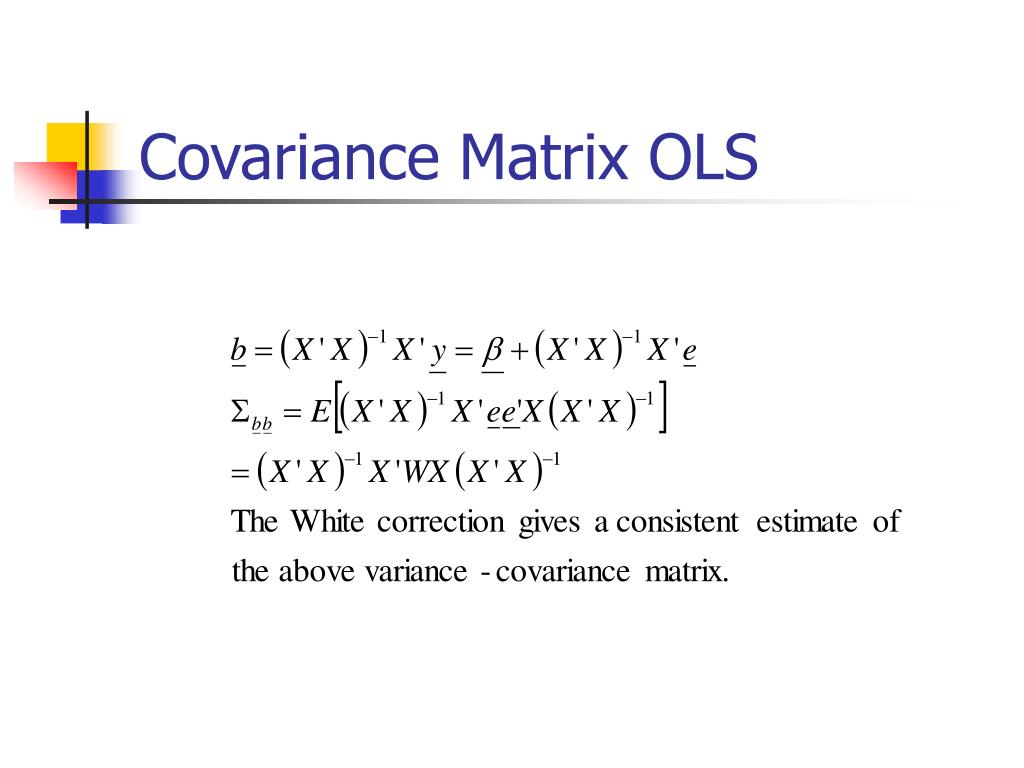

Linear Regression with OLS Heteroskedasticity and Autocorrelation by


OLS in Matrix Form YouTube


PPT Economics 310 PowerPoint Presentation, free download ID365091

